RAG Movie Plots: Designing a Modular RAG System

Introduction

Retrieval-Augmented Generation (RAG) systems are often described as linear pipelines: load documents, split text, generate embeddings, store vectors, retrieve context and invoke a language model. This description is conceptually accurate, but architecturally incomplete. When presented as a sequence of steps, the structural decisions that shape system behavior remain implicit. Choices about segmentation, persistence, filtering and prompt construction interact in ways that are difficult to observe when everything is treated as a single workflow.

The RAG Movie Plots project was created as a response to this limitation. Instead of focusing solely on end-to-end optimization, the goal is to expose the decisions that build up across layers and make them visible. Each stage of the pipeline is implemented as an independent module that produces explicit artifacts, allowing the system to be studied as a set of interacting components rather than a black box. In this sense, the project serves less as an application and more as an environment for experimentation and investigation.

From Pipeline to System

Previous analyses explored structural behaviors in isolation, such as chunking constraints and boundary effects. One example is the observation that chunk overlap may silently fail under line-based segmentation, revealing how a seemingly simple configuration choice can reshape retrieval behavior. While useful, these investigations also exposed a broader limitation: understanding individual mechanisms does not necessarily explain system behavior once those mechanisms interact.
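The overlap observation can be reproduced with a small, self-contained sketch. The `split_by_lines` function below is a hypothetical, simplified splitter written for this illustration; it is not the project's code and not any library's implementation. It assumes one plausible mechanism: overlap is assembled only from whole trailing lines of the previous chunk, so when every line is longer than the overlap budget, nothing is carried forward and no warning is raised.

```python
def split_by_lines(text: str, chunk_size: int, chunk_overlap: int) -> list[str]:
    """Pack whole lines into chunks of at most chunk_size characters.

    Overlap is built only from whole trailing lines of the previous chunk,
    so a line longer than chunk_overlap can never be carried forward.
    A single line longer than chunk_size is emitted as an oversized chunk.
    """
    def joined_len(parts: list[str]) -> int:
        return len("\n".join(parts))

    chunks: list[str] = []
    current: list[str] = []
    for line in (ln for ln in text.split("\n") if ln.strip()):
        if current and joined_len(current + [line]) > chunk_size:
            chunks.append("\n".join(current))
            # Carry trailing lines into the next chunk while they fit the overlap budget.
            carried: list[str] = []
            for prev in reversed(current):
                if joined_len([prev] + carried) > chunk_overlap:
                    break
                carried.insert(0, prev)
            # Drop carried lines if they would push the next chunk over chunk_size.
            while carried and joined_len(carried + [line]) > chunk_size:
                carried.pop(0)
            current = carried
        current.append(line)
    if current:
        chunks.append("\n".join(current))
    return chunks


def shared_line_counts(chunks: list[str]) -> list[int]:
    """Number of lines each chunk shares with the chunk before it."""
    return [
        len(set(a.split("\n")) & set(b.split("\n")))
        for a, b in zip(chunks, chunks[1:])
    ]


short_lines = "alpha\nbravo\ncharlie\ndelta\necho"
long_lines = "\n".join(letter * 80 for letter in "abc")

short_chunks = split_by_lines(short_lines, chunk_size=14, chunk_overlap=8)
long_chunks = split_by_lines(long_lines, chunk_size=100, chunk_overlap=20)

print(shared_line_counts(short_chunks))  # [1, 1, 1]: overlap is honored
print(shared_line_counts(long_chunks))   # [0, 0]: overlap silently disappears
```

Both calls request a non-zero overlap, yet only the short-line input receives one. The configuration is identical in spirit; the shape of the data decides whether it takes effect.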

A complete RAG system connects data normalization, segmentation, vector representation, retrieval logic and prompt construction into a layered structure where early decisions propagate downstream. Examining components separately helps reveal constraints, but only a system-level perspective shows how they build up over time.

Treating RAG as a system rather than a pipeline shifts the focus from optimization to traceability. Instead of asking how to improve answers, the project explores how those answers take shape. Making intermediate states persistent and observable enables clearer reasoning about cause and effect across layers, reveals hidden dependencies and supports more deliberate design trade-offs.

Why the Wikipedia Movie Plots Dataset

The dataset was chosen for how the text is structured, not for the domain it represents. It is also a widely used Kaggle dataset with strong usability ratings, making it a reliable baseline for experimentation and comparison.

Movie plots display substantial variability: some are long and written as a single paragraph, while others are shorter, fragmented or contain irregular line breaks. These differences directly affect how text can be segmented without losing semantic continuity. Because of this variability, chunking can’t rely on configuration alone; it needs to reflect the data. Inspecting the structure before defining preprocessing strategies reduces guesswork and leads to more reliable retrieval behavior.
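As a rough illustration of what inspecting the structure can mean in practice, the sketch below profiles plot texts by length and line layout. The function names and the chosen statistics are assumptions made for this example, not the project's actual analysis, and the two sample strings are invented rather than taken from the dataset.

```python
from statistics import median


def describe_plot(plot: str) -> dict:
    """Structural profile of one plot: size and line layout."""
    lines = plot.splitlines()
    non_empty = [line for line in lines if line.strip()]
    return {
        "chars": len(plot),
        "lines": len(non_empty),
        "blank_lines": len(lines) - len(non_empty),
        "longest_line": max((len(line) for line in non_empty), default=0),
    }


def summarize(plots: list[str]) -> dict:
    """Aggregate profiles to see how uniform the corpus really is."""
    if not plots:
        raise ValueError("summarize() needs at least one plot")
    profiles = [describe_plot(plot) for plot in plots]
    return {
        "plots": len(profiles),
        "median_chars": median(p["chars"] for p in profiles),
        # Share of plots written as one unbroken line or paragraph.
        "single_line_share": sum(p["lines"] == 1 for p in profiles) / len(profiles),
        # A line-based splitter cannot produce pieces smaller than this.
        "max_longest_line": max(p["longest_line"] for p in profiles),
    }


# Invented sample texts, for illustration only.
sample_plots = [
    "A single long paragraph.",
    "Short.\n\nFragmented\nlines.",
]
print(summarize(sample_plots))
```

Numbers such as the share of single-line plots or the longest line give a concrete basis for choosing a separator and chunk size, instead of picking values in the abstract.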

In this context, the dataset becomes a practical environment for experimentation. Chunking choices become observable, retrieval behavior becomes easier to interpret and prompt construction can be examined against realistic narrative continuity. Rather than simplifying the problem, the dataset exposes structural constraints, making design decisions measurable instead of hypothetical.

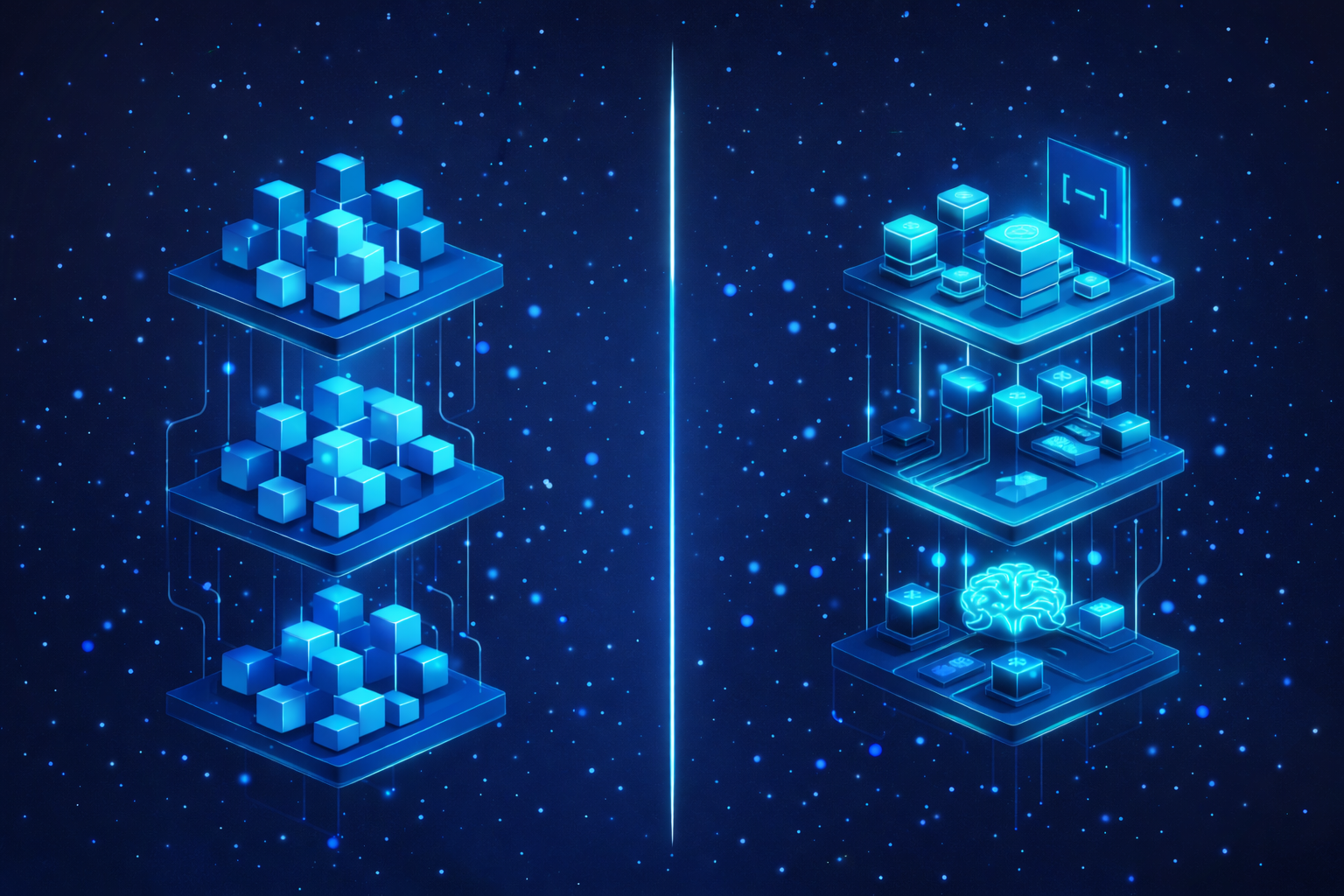

Architectural Separation

A central design decision of the project is the explicit separation between knowledge construction and knowledge usage. The system is divided into an offline ingestion phase responsible for producing structured semantic artifacts and an online phase responsible for retrieval and generation. This separation ensures that preprocessing decisions can be examined independently from runtime query behavior, allowing the impact of each layer to be understood in isolation.

By separating phases with a clear boundary, the architecture makes previously implicit dependencies explicit. Persisted artifacts become the contract between ingestion and querying, enabling reproducibility, inspection and controlled experimentation.

Offline Ingestion as Knowledge Construction

The ingestion phase transforms raw tabular data into a structured semantic representation that can later support retrieval. This process involves normalization, document generation, segmentation of narrative text, embedding creation and persistence into a vector store. Crucially, each step produces intermediate artifacts that remain accessible rather than transient.
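The stages named above can be sketched as a function that writes one artifact per step. This is a minimal sketch under stated assumptions, not the repository's implementation: the file names, the `Title` and `Plot` column names, the fixed-width character chunking and the `toy_embedding` placeholder are all chosen for illustration, and a JSONL file stands in for a real vector store.

```python
import csv
import hashlib
import io
import json
from pathlib import Path


def toy_embedding(text: str, dims: int = 8) -> list[float]:
    """Placeholder vectorizer: hashed bag-of-words, not a real embedding model."""
    vector = [0.0] * dims
    for token in text.lower().split():
        bucket = hashlib.sha256(token.encode("utf-8")).digest()[0] % dims
        vector[bucket] += 1.0
    return vector


def write_jsonl(path: Path, records: list[dict]) -> None:
    """Persist one stage's output so it can be inspected later."""
    with path.open("w", encoding="utf-8") as handle:
        for record in records:
            handle.write(json.dumps(record, ensure_ascii=False) + "\n")


def ingest(csv_text: str, out_dir: Path, chunk_chars: int = 200) -> dict:
    out_dir.mkdir(parents=True, exist_ok=True)

    # 1. Normalization: trim titles, collapse whitespace, drop empty plots.
    rows = csv.DictReader(io.StringIO(csv_text))
    normalized = [
        {"title": (row.get("Title") or "").strip(),
         "plot": " ".join((row.get("Plot") or "").split())}
        for row in rows
        if (row.get("Plot") or "").strip()
    ]
    write_jsonl(out_dir / "01_normalized.jsonl", normalized)

    # 2. Document generation: text plus metadata that retrieval can filter on.
    documents = [
        {"doc_id": i, "text": row["plot"], "metadata": {"title": row["title"]}}
        for i, row in enumerate(normalized)
    ]
    write_jsonl(out_dir / "02_documents.jsonl", documents)

    # 3. Segmentation: fixed-width character windows (the simplest possible choice).
    chunks = [
        {"chunk_id": f"{doc['doc_id']}-{n}", "doc_id": doc["doc_id"],
         "text": doc["text"][start:start + chunk_chars], "metadata": doc["metadata"]}
        for doc in documents
        for n, start in enumerate(range(0, len(doc["text"]), chunk_chars))
    ]
    write_jsonl(out_dir / "03_chunks.jsonl", chunks)

    # 4. Embedding and 5. persistence: vectors keyed by chunk_id.
    vectors = [{"chunk_id": c["chunk_id"], "vector": toy_embedding(c["text"])}
               for c in chunks]
    write_jsonl(out_dir / "04_vectors.jsonl", vectors)

    # The manifest records the settings that produced these artifacts.
    manifest = {
        "chunk_chars": chunk_chars,
        "embedding": "toy_embedding",
        "counts": {"normalized": len(normalized), "documents": len(documents),
                   "chunks": len(chunks), "vectors": len(vectors)},
    }
    (out_dir / "manifest.json").write_text(json.dumps(manifest, indent=2), encoding="utf-8")
    return manifest
```

Because every stage leaves a file behind, a change to `chunk_chars` can be compared across runs by diffing `03_chunks.jsonl` without invoking retrieval or generation at all.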

At this stage, there is no generation yet, so the focus is on shaping the knowledge base rather than answering questions. Decisions about chunking strategy, metadata structure and storage format directly influence what the system will later be able to retrieve. Treating ingestion as knowledge construction emphasizes its architectural role and positions preprocessing as a primary design concern.

Online Retrieval and Generation as Interpretation

The runtime phase operates exclusively on persisted artifacts, using them to interpret user queries within a constrained context. Queries are encoded, semantically similar chunks are retrieved, contextual input is assembled and a structured prompt guides generation. Instead of prioritizing optimization, the phase establishes a transparent baseline that makes retrieval behavior observable and prompt constraints explicit.
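The four runtime steps can be sketched in a few functions. Everything here is illustrative: `encode` is a bag-of-words placeholder rather than a real embedding model, the prompt wording is an assumption, the sample chunks are invented, and chunk vectors are recomputed in memory for brevity where a real system would load persisted ones.

```python
import math
import re
from collections import Counter


def encode(text: str) -> Counter:
    """Placeholder encoder: token counts stand in for an embedding vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[dict], k: int = 2) -> list[tuple[float, dict]]:
    """Score every chunk against the query and keep the top k."""
    query_vector = encode(query)
    scored = [(cosine(query_vector, encode(chunk["text"])), chunk) for chunk in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


def build_prompt(query: str, retrieved: list[tuple[float, dict]]) -> str:
    """Assemble the retrieved context and explicit constraints into one prompt."""
    context = "\n\n".join(
        f"[{chunk['metadata']['title']}] {chunk['text']}" for _, chunk in retrieved
    )
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {query}\nAnswer:"
    )


# Invented chunks standing in for artifacts loaded from the ingestion phase.
chunks = [
    {"text": "A shark terrorizes a beach town.", "metadata": {"title": "Example Film A"}},
    {"text": "A wizard boy attends a school of magic.", "metadata": {"title": "Example Film B"}},
]

question = "Which movie has a shark attacking a beach?"
hits = retrieve(question, chunks, k=1)
print(build_prompt(question, hits))
```

Returning the scores alongside the chunks keeps retrieval observable: the same list that feeds the prompt can be logged and compared when an upstream chunking decision changes.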

This perspective shows that generation doesn’t create knowledge; it relies on what was structured earlier. Response quality reflects how previous layers shaped the semantic space, making upstream design decisions especially important.

Modularity as Methodology

The modular structure of the project is a deliberate design choice, not just an implementation detail. By isolating ETL, chunking, embedding, retrieval and generation, each layer can be examined independently before evaluating the system as a whole. Modules communicate through artifacts rather than shared state, reducing hidden coupling and enabling targeted experimentation.

This approach allows questions about segmentation, retrieval filtering or prompt constraints to be investigated without conflating them. Modularity therefore becomes a mechanism for reasoning, making the architecture itself a research tool.

What This Series Explores

This post introduces the architectural foundation of the project. Subsequent posts examine each layer independently, including dataset structure, segmentation strategy, embedding persistence, retrieval behavior and prompt design. Studying layers in isolation makes it possible to draw system-level conclusions grounded in observable mechanisms rather than assumptions.

Future work in this series will explore how constraints propagate across modules and when modular design clarifies behavior versus when it oversimplifies it.

Series Context

This article establishes the architectural foundation of the RAG Movie Plots project. It defines the separation between offline ingestion and online retrieval and introduces modularity as a design principle rather than an implementation detail.

The broader goal of this series is to examine each layer independently, covering dataset structure, segmentation strategy, embedding persistence, retrieval behavior, and prompt construction, in order to draw system-level conclusions grounded in observable mechanisms rather than assumptions.

Subsequent articles move from architecture to data, beginning with a structural analysis of the dataset and its implications for segmentation and retrieval behavior.

Part of the RAG Movie Plots Series:

- Designing a Modular RAG System (current article)

- Understanding Narrative Structure Before Building RAG Systems

- From Structural Analysis to Chunking Decisions

Conclusion

RAG systems are often described as pipelines because pipelines are easy to explain. In practice, they behave as layered systems whose structural decisions accumulate over time. Understanding those layers is more valuable than tuning them blindly.

The RAG Movie Plots project treats architecture as something that can be inspected, questioned and refined. It is not a solution but an instrument, a way to observe how retrieval systems actually behave.

Project Repository

This analysis is part of the RAG Movie Plots project. The complete implementation, including ingestion pipelines, chunking experiments, vector store configuration and retrieval logic, is available on GitHub:

https://github.com/ilfncin/rag-movie-plots

Further Reading

Background materials related to RAG architecture and system design discussed in this post: